Every year, somewhere near the end of the first day of UXinsight Festival, something shifts in the room. Faces have become familiar, the first talks have landed, and at some point you notice the room exhale.

Not because the content has become easier; often it hasn’t. But because people have remembered where they are. Among peers who get it. After ten editions, that moment is the thing we are most proud of. The feedback we receive is rarely about a specific talk. It is about the feeling of the room. A home. A safe space. A community.

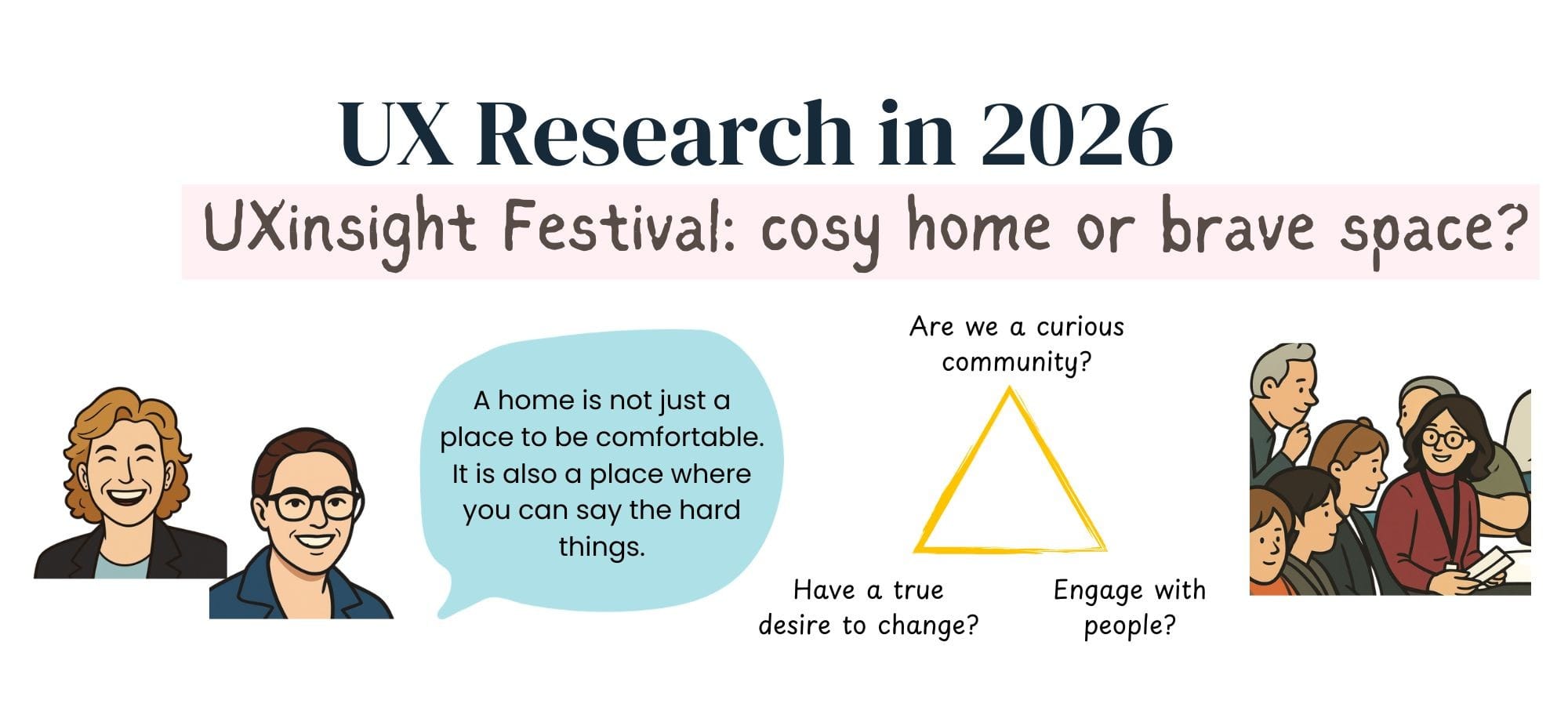

That sense of belonging is not just a nice side effect. It is what makes the harder conversations possible. Eva Becking and Gabriela Aguirrezabal put a name to it: the brave space. Not a space without friction, but a space where friction has somewhere to go.

This year, a question kept coming up in talks. Not planned, but threaded through almost every session: What is a UX researcher in 2026?

We didn’t leave with a clean answer. But definitely with a lot of food for thought.

1. The neutral researcher: a myth we built together

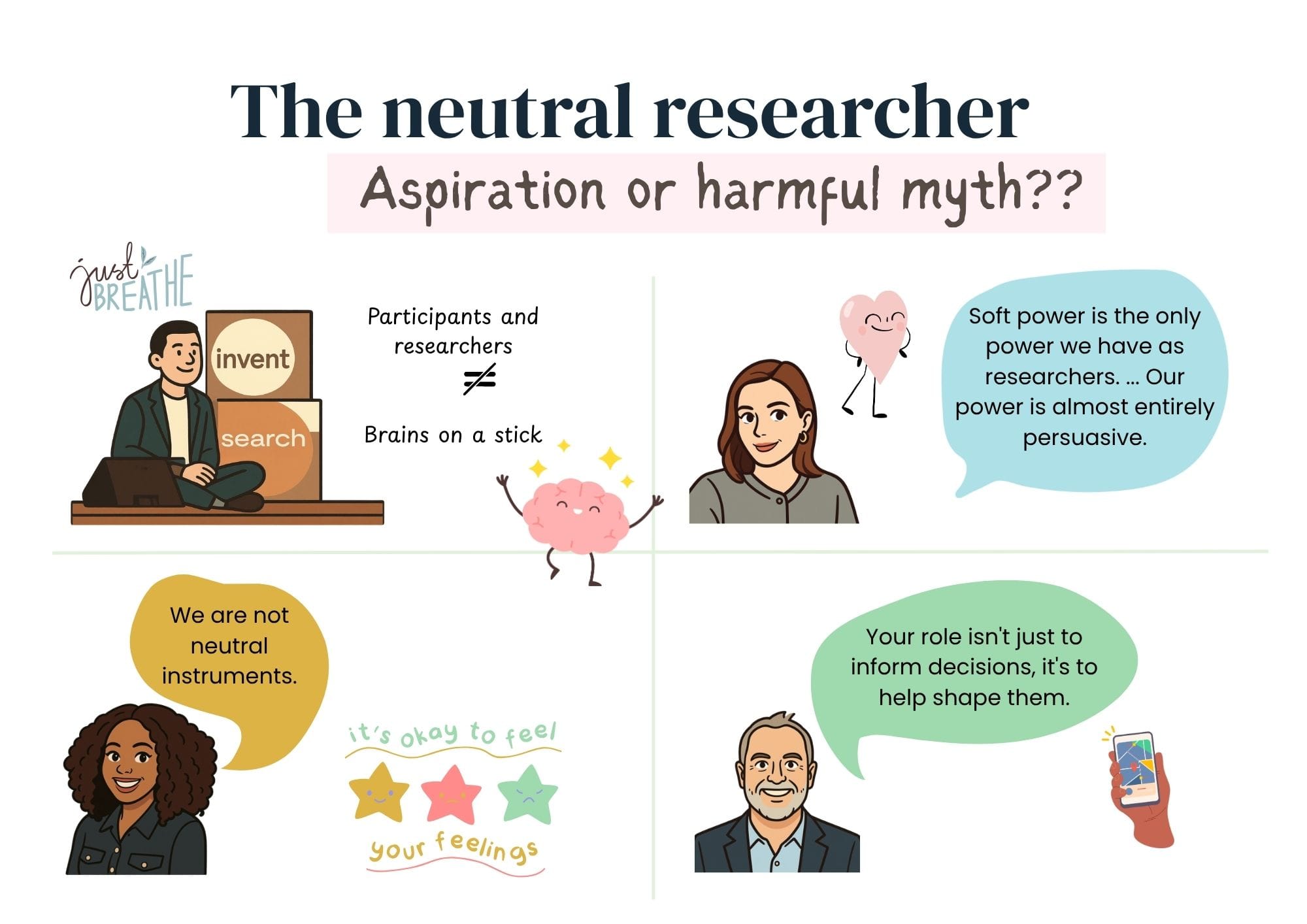

Ask a room full of UX researchers whether they have ever felt pressure to be more neutral, more composed, more objective than they actually felt in the moment. Almost every hand goes up. We know this because Anuoluwapo Adegboye asked the audience exactly that question.

We have spent years building a particular image of what a good researcher looks like. Objective. Precise. Someone who never brings too much of themselves into the room. It is an image so deeply embedded that many of us have stopped noticing it.

This year, several speakers challenged it.

Anuoluwapo did it with characteristic directness. Researchers are not instruments with bugs to fix, she said. We are practitioners with features to design around. Our instincts, our quirks, our reactions are always in the room. The question is not whether they shape the research. It is whether we are aware enough of them to use that influence well.

Yang Li came at it from a direction few had considered before. As both a UX researcher and a breathwork facilitator, he noticed that the same flattening we perform on ourselves, we also perform on our participants. We treat them as brains on sticks, targeting cognitive load and rational decision-making while ignoring the nervous system, the body, and the emotional state of the person sitting across from us. The neutral researcher and the rational participant are two sides of the same fiction.

Jason Giles, speaking not as a researcher but as the product leader researchers report to, was blunt. Staying completely neutral is not “rigor.” It is avoidance. What he needs beside him is not a “reporter of data” but a “strategic navigator” willing to say, with conviction, when to speed up and when to slow down.

And Ania Mastalerz, drawing on a childhood in diplomatic circles, made the structural case. Soft power is the only power researchers have. It requires showing up with confidence, with a point of view, and the willingness to campaign for what needs to happen.

Four talks. Four disciplines. The same underlying message.

But here is the tension worth sitting with. Abandoning neutrality as a professional identity is easier to declare at a Festival than to practice on a Monday morning. Many researchers are still embedded in organizations that reward the opposite. Where having opinions is read as bias. Where pushing back is read as not being a team player. Where the safest version of yourself is the quiet one with the well-structured report.

And perhaps the most honest thing said on this theme came not from a talk about influence or strategy, but from Eva Becking and Gabriela Aguirrezabal. In a talk explicitly about designing brave spaces, they admitted they are both conflict-avoidant. That the room they are teaching others to build is one they still find difficult to inhabit themselves. We can relate to that more than we would like to admit.

2. We use the word rigor constantly. What we rarely do is question it.

Every researcher in that room would say their work is rigorous. But ask what that means, and the answers start to diverge. Across the talks, rigor was cited repeatedly and just as often quietly contradicted. Not because anyone was being careless, but because the context in which research is practiced has shifted.

Tara Bosenick made a strong case for standards. The field is facing a quality crisis, driven by undertrained practitioners, unreflective adoption of AI, and the slow erosion of what it means to do research well.

Natasha den Dekker made a different argument. The metrics we consider the gold standard were built for a world of desktop browsers and linear user journeys. Applied to the fragmented, app-first reality most users now inhabit, they can produce data that is technically accurate yet practically misleading.

Vidhika Bansal went further, drawing on behavioral science to show that some of our most standard research questions ask participants to do something cognitively impossible. The data looks clean, but it carries no useful meaning.

And then Nidhi Jalwal and Serena Westra demonstrated that fast and light, when done with genuine care for the strength of the evidence, can be rigorous. The discomfort in the room was not about the quality of the argument. It was about how little it resembled what we were taught rigor looks like.

None of these speakers was being careless about quality. All of them were being rigorous, just about different things, in different contexts, toward different ends. The uncomfortable question is whether “rigor” has sometimes functioned as a shield in the research community. A word that signals authority without requiring agreement on what it actually means in practice.

3. Insights not landing is the field’s oldest wound

There is a question many researchers are afraid to ask too loudly: what actually happens to our work after we deliver it?

Domina Kiunsi faced the question and named something the room clearly recognized. Insights that were received warmly and then quietly forgotten. Findings that were known but never acted on. Insights that had shaped a product once, and were now just… lingering. She called them the three afterlives of insights. Most people in the room had lived at least one of them.

What made the session land so hard was not the diagnosis. It was the honesty about what that experience costs. The effort, the craft, the care that goes into research that disappears into an organization without a trace is not a minor frustration. Over time, it is a painful one.

Larry Becker and Ania Mastalerz both offered routes forward. Communication frameworks, stakeholder coalitions, accordion reports, nemawashi. Genuinely useful. And yet the accumulated weight of these sessions raised a question none of the speakers addressed directly: at what point does optimizing how we communicate research become an elaborate adaptation to a system that was never quite designed to receive it?

4. Democratization: who we forgot to design for

Not long ago, the idea of research reaching further into organizations felt like progress. Product managers running interviews. Designers testing their own prototypes. Stakeholders closer to the user. The field championed it, built tools for it, and celebrated it.

Tara Bosenick put some numbers to what happened next. A 70% drop in UX job postings between 2022 and 2023. Researchers spending significant time correcting work done by people without the training to do it well. Boot camp graduates entering the field after as little as one week of education. And now, AI generating research-shaped outputs faster than any team of practitioners ever could.

She was not arguing against democratization. She was naming its debt. The field celebrated the reach without taking responsibility for the infrastructure that would have made it work. The standards, the mentorship, and the organizational literacy to assess what good research actually looks like. We handed the tools out and assumed the practice would follow.

Nicola Young made the same argument from a direction the field rarely looks: inward. The conversation about inclusion in research almost always concerns participants: whose voices we capture, who is represented in our studies. Nicola asked a different question. Which researchers are we excluding? Diagnosed with repetitive strain injury in both hands, she found herself unable to use the standard tools of the profession. The entire infrastructure of research practice had been built around a particular physical capability, and she had never been considered in its design.

Both stories are about the same failure. Building for the assumed center. And hoping the rest will figure it out.

What makes this more pressing is that AI has arrived before the debt was settled. The volume of research-shaped activity in organizations is about to increase dramatically. The question of what separates a genuine insight from a confident-sounding hallucination is becoming more urgent, not less. And the field best placed to answer that question is the same one still figuring out how to defend its own quality standards.

5. AI is the mirror, not the threat

Every speaker at this year’s festival touched on AI. Some with urgency, some with skepticism, some with genuine curiosity. A few with all three at once.

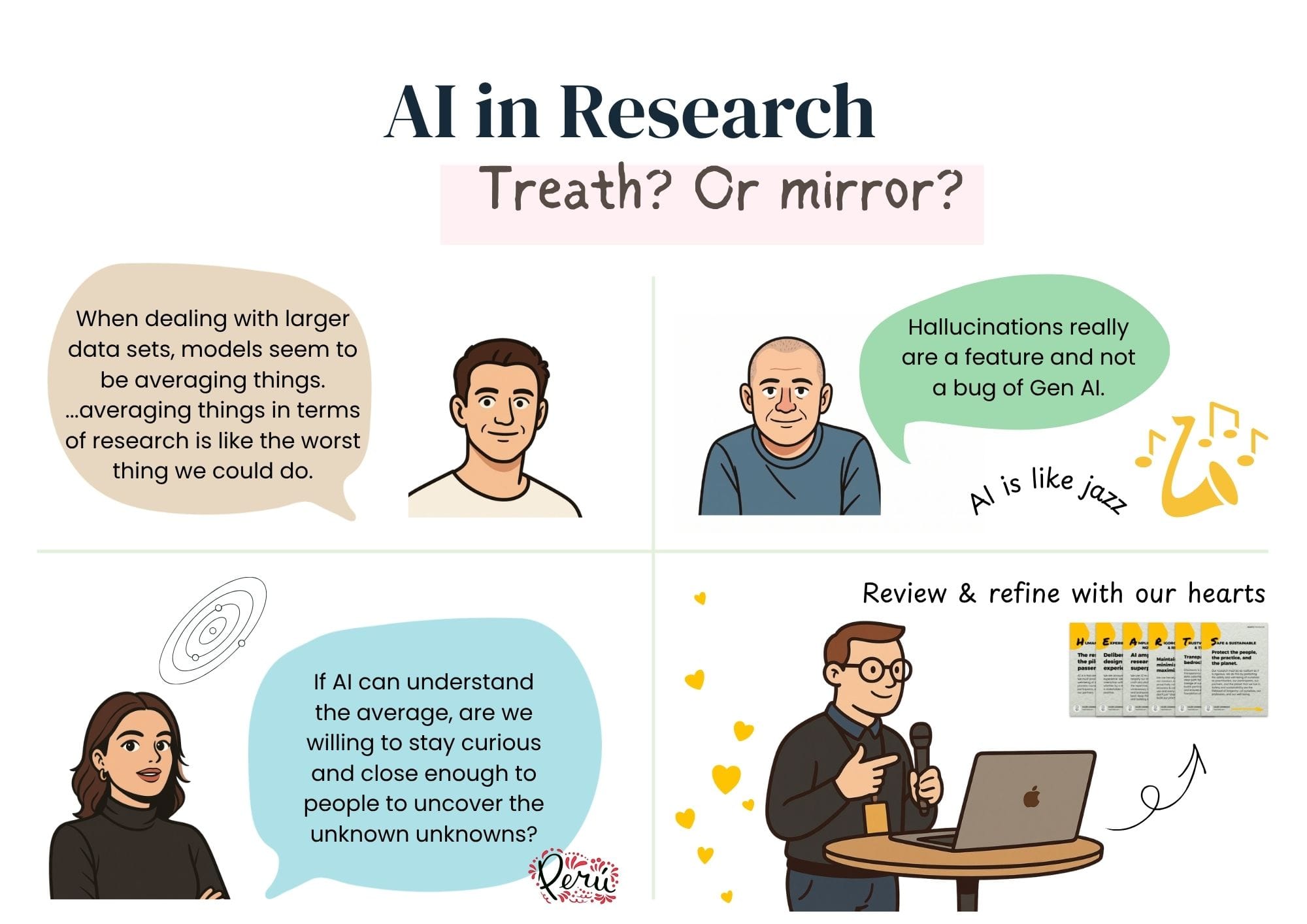

The dominant framing in the field positions AI as a threat to be managed or an opportunity to be seized. Both framings are understandable. Neither paints the full picture.

What we observed across fifteen talks is something more interesting. AI is a mirror. It is reflecting back questions the field has been carrying for years. Questions about quality, about impact, about identity. AI did not create the question of what a UX researcher is. It did not invent the problem of insights not landing, or the tension between speed and rigor. It simply made those questions urgent in a way they had not been before.

Colman Walsh explained why LLMs are structurally incapable of achieving the accuracy researchers need. Jason Giles showed data suggesting AI outputs skew toward what the user wants to hear: optimistic, agreeable, unchallenging. Daniel Korczynski demonstrated that AI averages where research needs to differentiate. Domina Kiunsi warned that AI tools learning from organizational repositories will automatically propagate outdated insights at scale.

And Nia Rodriguez asked a question that should lead to some introspection: If AI can understand the average, are we willing to stay curious and close enough to people to uncover the unknown unknowns? Her fieldwork in Peru kept surfacing people and ecosystems that no dataset had captured. Informal actors filling gaps that the official system had never noticed, let alone designed for. The things that most often change strategy live precisely where AI cannot go. The things that surface when you are actually present, when you take the time to look around, talk to people, and stay long enough for the real picture to emerge.

None of this means AI is useless in research. Several speakers showed genuinely thoughtful applications. But the pattern across all of them was consistent. The value of AI in research is entirely dependent on the quality of the human judgment surrounding it. Which means the field’s most urgent project is not learning to use the tools. It is being honest about what human judgment in research actually consists of and whether the infrastructure exists to protect and develop it.

During the workshop on Monday, Kaleb Loosbrock offered one answer to that question. His HEARTS framework (Human-led, Experience-focused, Amplification not Automation, Rigorous & Responsible, Trustworthy & Transparent, Safe & Sustainable) is a practical way to audit whether an AI-assisted workflow still has a human at its center. Not a checklist. A set of questions worth asking honestly.

6. The question the Festival couldn’t answer and why that’s okay

What is a UX researcher in 2026?

Three days of UXinsight Festival did not offer a clean answer. It didn’t package the uncertainty into a reassuring framework. But it created a room where it felt safe to say, “We are not sure.” We are not sure our metrics are telling us the truth. We are not sure our insights are landing. We are not sure what neutrality even means anymore.

And to discover, together, that sitting with those questions is not a sign of a field in crisis. It is a sign of a field paying attention.

The brave space is not a place where everything gets resolved. It is a place where the right questions finally get asked. What you do with them in your organization, in your team, in your next project, is where the work actually begins.

The conversation continues. We hope you will be part of it.

Written with the support of Claude Sonnet 4.6